How AI Crawlers Like GPTBot and CCBot Actually Scrape Your Site: A Technical Analysis

AI crawlers like GPTBot and CCBot now crawl websites at unprecedented volumes. GPTBot alone generated 569 million requests across major networks in a single month. Around 30% of global web traffic today comes from bots, and GPTBot's share surged from 5% to 30% between May 2024 and May 2025. These crawlers consume substantial bandwidth: mid-sized websites face 138 GB in monthly traffic and $1,380 in costs. We'll look at how OpenAI bots and other AI web crawlers locate and scrape your content, analyze their request patterns, and explore defense mechanisms, including robots.txt configurations for OpenAI's crawlers.

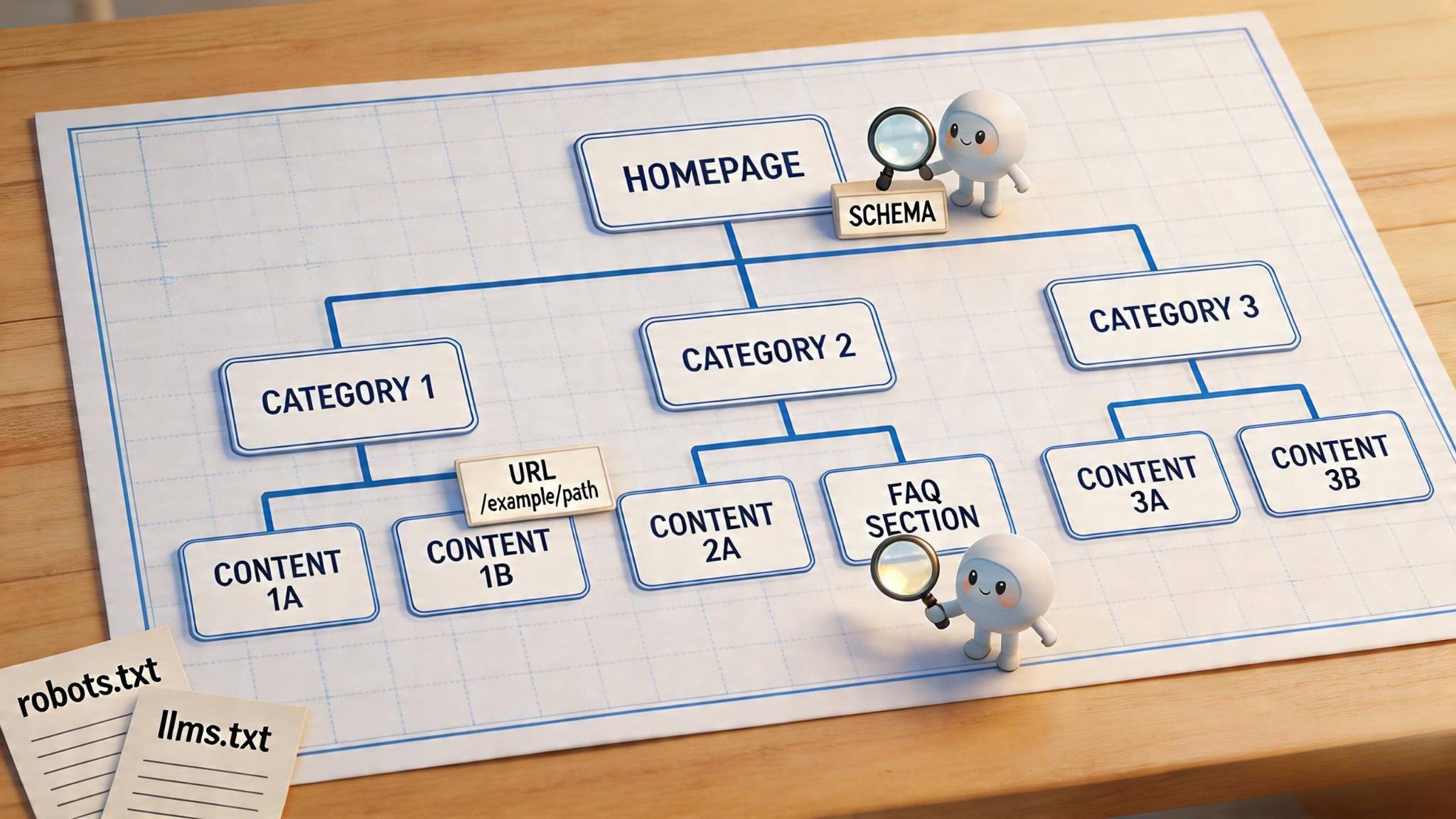

How AI Crawlers Locate and Access Your Website

Before any crawler can scrape your content, it must translate your domain name into a machine-readable IP address through DNS resolution. When a crawler first contacts your site, it asks a DNS resolver for the IP address that corresponds to your domain. This translation happens through a coordinated sequence across multiple server types. The DNS resolver contacts root nameservers, which direct it to the appropriate top-level domain (TLD) server based on your extension. The TLD server then refers the resolver to your authoritative nameserver, which holds the actual DNS records that map your domain to its IP address.

DNS Resolution and Initial Connection

DNS resolution represents a bottleneck for AI web crawler operations. DNS requests take between 10ms and 200ms to complete due to the synchronous nature of DNS interfaces 1. Once a crawler thread makes a DNS request, other threads can remain blocked until that request completes. OpenAI crawlers and other AI bots address this limitation by maintaining DNS caches that store domain-to-IP mappings. Automated jobs update these caches regularly and prevent repeated DNS lookups for frequently accessed domains. A single resolution often completes in under 100 milliseconds 2, which is imperceptible during normal browsing but significant at crawler scale.

For this reason, GPTBot and similar crawlers cache DNS information aggressively. The same principle applies to ordinary browsing: your browser cache, operating system cache, and the resolver's own cache form multiple layers that reduce resolution time for sites you visit regularly. Popular DNS resolvers deliver faster performance because they maintain larger caches filled with frequently requested domains. Every DNS record has a TTL value that determines cache duration, which affects how quickly crawlers reconnect to your site after DNS changes.
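The caching idea can be sketched in a few lines of Python. This is a minimal illustration, not how any particular crawler implements it: the `DnsCache` class is hypothetical, and it uses one fixed TTL for every entry because `socket.gethostbyname` does not expose the TTL of the DNS record itself.

```python
import socket
import time


class DnsCache:
    """Tiny in-memory DNS cache with a fixed TTL (hypothetical helper)."""

    def __init__(self, ttl_seconds=300.0, resolver=socket.gethostbyname,
                 clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self._resolver = resolver   # swappable, so the cache can be tested offline
        self._clock = clock
        self._entries = {}          # hostname -> (ip, expiry_time)

    def resolve(self, hostname):
        now = self._clock()
        cached = self._entries.get(hostname)
        if cached is not None and cached[1] > now:
            return cached[0]        # cache hit: no network round trip
        ip = self._resolver(hostname)  # cache miss or expired entry
        self._entries[hostname] = (ip, now + self.ttl_seconds)
        return ip
```

Calling `DnsCache().resolve("example.com")` twice within the TTL triggers only one real lookup; the second call is answered from memory.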

Entry Point Discovery Methods

Crawlers use seed URLs as their entry points. For example, to crawl an entire university website, a crawler might start with the institution's domain name as the seed URL 1. The system expands outward from there by following discovered links. These seed URLs come from multiple sources: XML sitemaps, previously indexed pages, or manually specified lists 1. Crawler operators may also learn about sites through other signals, such as public DNS services that observe queries, installed analytics, or browser extensions that report visited URLs.

The crawl frontier functions as a queue that stores URLs the crawler has discovered but not yet downloaded 1. In its simplest form this data structure is first-in, first-out (FIFO), though modern crawlers apply prioritization logic on top. A scheduler manages this queue and decides which URLs to visit next based on page importance, estimated content freshness, and per-domain limits 1. Homepages and high-authority pages receive priority over pages nested deep in the site. Sites with frequent updates get crawled more often than static documentation.
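A prioritized frontier can be sketched with a heap. The scoring rule below (shallower paths first) is only an assumed stand-in for "page importance"; real schedulers combine many signals, and the `CrawlFrontier` name is hypothetical.

```python
import heapq
import itertools
from urllib.parse import urlsplit


class CrawlFrontier:
    """Priority queue of URLs that were discovered but not yet fetched."""

    def __init__(self):
        self._heap = []
        self._seen = set()
        self._counter = itertools.count()  # tie-breaker keeps FIFO order within a score

    @staticmethod
    def score(url):
        # Lower score = crawled sooner. Path depth is an assumed proxy for importance.
        path = urlsplit(url).path
        return len([part for part in path.split("/") if part])

    def add(self, url):
        if url in self._seen:
            return False               # already queued or crawled
        self._seen.add(url)
        heapq.heappush(self._heap, (self.score(url), next(self._counter), url))
        return True

    def pop(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)
```

With this rule, a homepage added after a deeply nested page is still popped first, and URLs with equal scores come out in the order they were discovered.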

Robots.txt serves a dual purpose for controlling OpenAI crawlers and other AI bots in 2026 3. The file manages both traditional search engine access and AI training bot permissions. Robots.txt rules for OpenAI's crawlers now determine whether your content becomes eligible for AI model training datasets, for real-time answer generation, for both, or for neither.

Crawl Budget Allocation Logic

Crawl budget defines the set of URLs that a crawler can and wants to access on your site. Google's system calculates this through two elements: crawl capacity limit and crawl demand 4. The capacity limit represents the maximum simultaneous parallel connections a crawler can use without overwhelming your servers. Google's crawlers calculate this limit to provide complete coverage of important content while preventing server overload.

Crawl demand varies by crawler type and purpose. AdsBot maintains higher demand when sites run dynamic ad targets, while product crawlers increase activity based on merchant feed contents 4. For AI crawlers, demand depends on content quality and training value rather than update frequency alone. AI systems prefer fewer but higher-quality pages 5. They deprioritize noisy URLs faster and rely on crawl consistency. OpenAI bots may avoid using your content as a source if it appears irregularly or shows instability.

Sites with many duplicate URLs or unwanted pages waste crawler resources 4. When your URL inventory contains substantial duplicate content, crawlers spend their time on low-value pages. Low demand reduces crawl frequency even if crawl capacity limits aren't reached. Pages that load fast with clear link structures get crawled more efficiently than slow, poorly organized sites 1. Server response time affects crawl patterns directly, as slow or unstable servers trigger automatic frequency reductions.

The Technical Scraping Process: Step-by-Step Breakdown

A crawler establishes a connection to your server and constructs an HTTP GET request to retrieve page content. This request contains headers that specify the accepted content type, typically 'text/html' for web pages. OpenAI bots and other AI web crawlers identify themselves through User-Agent strings in these headers, which is how GPTBot can be distinguished from regular browser traffic. The crawler sends this request to your server's IP address and initiates the fundamental exchange that powers all web scraping operations.

Step 1: HTTP Request Initiation

The HTTP request functions as a structured message asking your server for specific resources. Programming libraries handle this construction automatically. Python's requests package simplifies the process by managing connection handling, header formation, and error catching. The request specifies the target URL, HTTP method (almost always GET for crawling), and additional headers that control cache behavior and compression priorities. Modern data crawler systems send thousands of these requests at once and distribute load across multiple threads to maximize throughput.
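As a rough sketch of such a request, the snippet below builds (but does not send) a GET request with the standard library's `urllib.request` rather than the `requests` package mentioned above, so that it runs without extra installs. The `ExampleBot` user-agent and the exact header values are placeholders, not what any real crawler sends.

```python
import urllib.request


def build_crawl_request(url, user_agent="ExampleBot/0.1 (+https://example.com/bot)"):
    """Construct a GET request with crawler-style headers (illustrative values)."""
    headers = {
        "User-Agent": user_agent,      # how the crawler identifies itself
        "Accept": "text/html",         # preferred content type
        "Accept-Encoding": "gzip",     # compression preference
        "Cache-Control": "no-cache",   # cache behavior
    }
    return urllib.request.Request(url, headers=headers, method="GET")


request = build_crawl_request("https://example.com/")
print(request.get_method(), request.full_url)
print(request.header_items())
```

Passing the object to `urllib.request.urlopen(request, timeout=10)` would actually send it; a production crawler would wrap that call in error handling and run many such requests concurrently.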

Step 2: Server Response Analysis

Your server responds with an HTTP status code, headers, and the requested content. A 200 status indicates successful retrieval. A 429 signals rate limiting that forces the crawler to reduce frequency. The response headers reveal content type, which determines how the crawler processes the payload. HTML responses get parsed directly. JSON responses from API endpoints provide structured data without HTML overhead. Single-page applications complicate this process by delivering minimal HTML initially and then loading content through subsequent JavaScript-driven API calls. Crawlers monitor network traffic and capture these secondary requests. They extract data directly from API responses rather than wait for DOM rendering.
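The branching described above might look like the following sketch. The function name, the action labels, and the 60-second default delay are all assumptions chosen for illustration; the headers are assumed to arrive as a plain dictionary with canonical key names.

```python
def plan_next_step(status, headers):
    """Decide what to do with a response; returns an (action, detail) tuple."""
    content_type = headers.get("Content-Type", "").split(";")[0].strip().lower()
    if status == 429:
        # Rate limited: honor Retry-After when it is a number of seconds,
        # otherwise fall back to an assumed default delay.
        retry_after = headers.get("Retry-After", "")
        delay = int(retry_after) if retry_after.isdigit() else 60
        return ("slow_down", delay)
    if status != 200:
        return ("skip", status)
    if content_type == "text/html":
        return ("parse_html", None)
    if content_type == "application/json":
        return ("parse_json", None)
    return ("skip", content_type)
```

For instance, `plan_next_step(429, {"Retry-After": "120"})` tells the caller to slow down for 120 seconds, while a 200 response with `application/json` is routed to the JSON path.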

Step 3: Content Parsing and Extraction

The raw HTML arrives as a long text string that must be transformed into a navigable structure. Parsing libraries convert this string into a Document Object Model (DOM), a searchable tree of elements. BeautifulSoup in Python and similar tools traverse this DOM tree using CSS selectors or XPath queries to locate specific data. When scraping by hand, you inspect a page's HTML and identify the tags and classes that wrap your target content. The crawler mirrors this process and selects elements like span.titleline > a to extract article titles. During extraction, content is cleaned by removing excess whitespace, special characters, and formatting artifacts so that the resulting data is usable.
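To keep the example dependency-free, the sketch below uses the standard library's `html.parser` instead of BeautifulSoup and hand-codes the equivalent of the `span.titleline > a` selector. The `TitleLinkParser` class and the sample markup are made up for illustration, and the tag handling is simplified.

```python
from html.parser import HTMLParser

VOID_TAGS = {"br", "hr", "img", "input", "link", "meta"}  # elements with no closing tag


class TitleLinkParser(HTMLParser):
    """Collects (text, href) for <a> elements directly inside <span class="titleline">."""

    def __init__(self):
        super().__init__()
        self._stack = []        # currently open elements as (tag, class_list)
        self._current = None    # (text_parts, href) while inside a matching <a>
        self.titles = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        parent = self._stack[-1] if self._stack else None
        if tag == "a" and parent and parent[0] == "span" and "titleline" in parent[1]:
            self._current = ([], attrs.get("href"))
        if tag not in VOID_TAGS:
            self._stack.append((tag, (attrs.get("class") or "").split()))

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            # Clean the text: collapse runs of whitespace and trim the ends.
            text = " ".join("".join(self._current[0]).split())
            self.titles.append((text, self._current[1]))
            self._current = None
        for i in range(len(self._stack) - 1, -1, -1):  # pop back to the matching tag
            if self._stack[i][0] == tag:
                del self._stack[i:]
                break

    def handle_data(self, data):
        if self._current is not None:
            self._current[0].append(data)


SAMPLE_HTML = (
    '<tr><td><span class="titleline">'
    '<a href="https://example.com/post">  Example   Post </a>'
    '<span class="sitebit"><a href="from?site=example.com">example.com</a></span>'
    "</span></td></tr>"
)

parser = TitleLinkParser()
parser.feed(SAMPLE_HTML)
print(parser.titles)
```

Only the first link is collected, because the second anchor's direct parent is the nested `sitebit` span rather than the `titleline` span.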

Modern websites that render content asynchronously require JavaScript execution to populate the page. Traditional HTML parsing fails here because the initial source lacks the needed data. Crawlers address this by rendering pages in headless browsers and waiting for JavaScript to execute and populate the DOM. Network request monitoring offers an alternative approach: instead of parsing rendered HTML, crawlers intercept the exact API calls your site uses to fetch data. This method captures structured JSON payloads directly and bypasses fragile DOM selectors.

Step 4: Link Discovery and Queue Management

While extracting content, the crawler also identifies hyperlinks found in anchor tags, sitemaps, and canonical tags. Each discovered URL becomes a candidate for future crawling. These links are added to the request queue, a dynamic data structure that stores URLs awaiting download. The queue operates on prioritization logic rather than simple first-in, first-out ordering. URLs from high-authority pages enter the queue with elevated priority, so important content gets crawled before obscure pages.

Queue management at scale requires deduplication to prevent crawling the same URLs repeatedly. Bloom filters offer space-efficient probabilistic checking. When a crawler discovers a URL, it queries the Bloom filter to determine whether that URL was seen previously. False positives occur occasionally and cause new URLs to be skipped, but this tradeoff enables processing massive URL sets without exhaustive database lookups. Distributed crawlers share queues across multiple nodes and use consistent hashing to partition URLs, which prevents different crawler nodes from fetching the same pages at once.
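A Bloom filter is small enough to sketch directly. The version below is illustrative only: the bit-array size, the number of hash functions, and the use of SHA-256 with double hashing are assumptions, not the parameters of any real crawler.

```python
import hashlib


class BloomFilter:
    """Probabilistic set: no false negatives, occasional false positives."""

    def __init__(self, size_bits=1 << 20, num_hashes=5):
        self.size_bits = size_bits
        self.num_hashes = num_hashes
        self._bits = bytearray(size_bits // 8 + 1)

    def _positions(self, item):
        # Derive k bit positions from two halves of one SHA-256 digest (double hashing).
        digest = hashlib.sha256(item.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1
        return [(h1 + i * h2) % self.size_bits for i in range(self.num_hashes)]

    def add(self, item):
        for pos in self._positions(item):
            self._bits[pos // 8] |= 1 << (pos % 8)

    def __contains__(self, item):
        return all(self._bits[pos // 8] & (1 << (pos % 8))
                   for pos in self._positions(item))


seen = BloomFilter()
url = "https://example.com/blog/post"
if url not in seen:      # first sighting: enqueue and remember it
    seen.add(url)
print(url in seen)
```

The filter above uses about 128 KiB of memory regardless of how long the URLs are, which is the property that makes it attractive for very large frontiers.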

Step 5: Data Storage and Indexing

Extracted content gets organized into formats optimized for retrieval and analysis. Search engines build inverted indexes that map words to pages containing them, alongside document stores that preserve original content and metadata. Common Crawl generates WARC files, a standardized archive format that captures HTTP transactions with full request and response data. Structured extraction outputs to JSON, CSV, or XML depending on downstream processing requirements. The storage layer implements cleaning algorithms that remove duplicates and errors before final persistence. This ensures data quality for AI training datasets or search indexes.
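The inverted index mentioned above can be illustrated with a toy version. The tokenizer (lowercased alphanumeric runs) and the function names are assumptions for this sketch; production indexes add stemming, ranking, and compressed on-disk storage.

```python
import re
from collections import defaultdict


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def build_inverted_index(documents):
    """Map each word to the set of document IDs (here, URL paths) containing it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for word in tokenize(text):
            index[word].add(doc_id)
    return index


def search(index, query):
    """Return the documents that contain every word in the query."""
    words = tokenize(query)
    if not words:
        return set()
    return set.intersection(*(index.get(word, set()) for word in words))


documents = {
    "/a": "AI crawlers scrape pages",
    "/b": "Crawlers respect robots.txt",
    "/c": "Pages about DNS",
}
index = build_inverted_index(documents)
print(search(index, "crawlers pages"))
```

Looking up a word is a single dictionary access, which is why this structure suits retrieval far better than scanning every stored page.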

OpenAI Crawlers: GPTBot and ChatGPT-User Technical Behavior

OpenAI operates multiple distinct user agents, each serving a specific function within its ecosystem. GPTBot crawls publicly available content to train generative AI foundation models, while ChatGPT-User executes actions triggered by user requests rather than autonomous scanning. A third agent, OAI-SearchBot, focuses on surfacing websites in ChatGPT's search features. Each agent can be controlled independently, which allows you to permit search indexing while blocking training data collection.

GPTBot Request Characteristics

GPTBot identifies itself through a specific user-agent string: Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; GPTBot/1.3; +https://openai.com/gptbot 6. This transparent identification enables server log analysis and targeted blocking. OpenAI publishes IP address ranges at https://openai.com/gptbot.json 6 and provides verifiable source validation beyond user-agent checking alone. GPTBot's market share among AI crawlers jumped from 4.7% to 11.7% between July 2024 and July 2025 7, showing increased training data demand.

The crawler applies content filtering during collection. It automatically excludes pages requiring paywall access, sources collecting personally identifiable information, and text violating OpenAI's content policies 6. This preprocessing occurs before data enters training pipelines, though the exact filtering algorithms remain proprietary. GPTBot remains the most blocked AI crawler, with 312 domains disallowing access as of June 2025 7. However, 61 domains explicitly allow GPTBot access, recognizing potential visibility benefits within ChatGPT outputs 7.

ChatGPT-User API-Based Crawling

ChatGPT-User operates differently from traditional web crawlers. The system may visit web pages using the ChatGPT-User agent when users ask ChatGPT a question or interact with Custom GPTs 6. The full user-agent string reads: Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; ChatGPT-User/1.0; +https://openai.com/bot 6. Published IP addresses appear at https://openai.com/chatgpt-user.json 6.

OpenAI revised its ChatGPT-User documentation on December 9, 2025, and removed language showing robots.txt compliance 8. The updated documentation states explicitly: "Because these actions are initiated by a user, robots.txt rules may not apply" 6. This positions ChatGPT-User as a proxy for human browsing rather than autonomous crawling. Requests surged 2,825% between May 2024 and May 2025 and reached 1.3% of total crawler traffic 7. The policy change means traditional crawler control mechanisms no longer restrict user-initiated ChatGPT actions.

OpenAI robots.txt Compliance Mechanisms

Only OAI-SearchBot and GPTBot respect robots.txt directives following the December 2025 documentation update 8. Sites can allow one bot while blocking another. For example, permitting OAI-SearchBot for search visibility while disallowing GPTBot prevents training data usage. Blocking OAI-SearchBot no longer guarantees exclusion from ChatGPT search results, because content can still reach them through other pathways 8.

When both bots are permitted, OpenAI may use the results of a single crawl for both purposes to avoid duplicate requests 6. Systems require about 24 hours to adjust after robots.txt updates 6. This delay reflects cache propagation and crawl schedule coordination across distributed infrastructure.

Content Selection Algorithms

OpenAI crawlers prioritize quality over quantity. The systems prefer fewer high-quality pages and deprioritize noisy URLs faster than traditional search crawlers. Content that appears irregularly or shows instability tends to be avoided as a source. GPTBot favors clear structure and consistent availability before material is incorporated into training datasets. Sites with substantial duplicate content waste crawler resources and reduce future crawl frequency even when robots.txt permits access.

CCBot and Common Crawl's Data Collection Architecture

Common Crawl operates as a 501(c)(3) non-profit organization founded in 2007 with the goal of democratizing access to web information. The organization produces and maintains an open repository of web crawl data that anyone can access and analyze 2. The corpus contains petabytes of data collected since 2008 9. Because the dataset is open and free, organizations, academia, and non-profits can collaborate on complex challenges like climate change and public health 2. More than 10,000 academic studies had cited Common Crawl by 2024 10, which shows its effect on research communities.

Open Dataset Purpose and Methodology

CCBot, the organization's web crawler, identifies itself via its User-Agent string as: CCBot/2.0 (https://commoncrawl.org/faq/) 2 1. The crawler runs on dedicated IP address ranges with reverse DNS, so webmasters can verify whether a logged request stems from the real CCBot 2 1. CCBot is based on Apache Nutch and obeys robots.txt directives while rate-limiting its requests to individual servers 4. The crawler supports both HTTP/1.1 and HTTP/2, the latter only over TLS (https://) 1.

CCBot uses Harmonic Centrality to prioritize URLs to crawl 4. This graph-theoretic measure shows a node's proximity to the structural core of the web. The metric is computed from Web Graph data and is used alongside PageRank 4. The crawler has multiple algorithms designed to prevent undue load on the web servers of a given domain 1. It uses an adaptive back-off algorithm that slows down requests if your web server responds with an HTTP 429 or 5xx status 1. By default, the crawler waits a few seconds before sending the next request to the same site 1.
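The back-off behavior can be approximated with a per-host delay that grows on 429 or 5xx responses and shrinks again after successes. This is a sketch under assumed parameters (5-second base delay, doubling and halving, 300-second cap), not CCBot's actual algorithm, which the sources above do not spell out.

```python
class AdaptiveDelay:
    """Tracks a politeness delay per host and adapts it to server responses."""

    def __init__(self, base_delay=5.0, max_delay=300.0):
        self.base_delay = base_delay   # assumed default wait between requests
        self.max_delay = max_delay     # assumed upper bound
        self._delays = {}              # host -> current delay in seconds

    def current(self, host):
        return self._delays.get(host, self.base_delay)

    def record(self, host, status):
        delay = self.current(host)
        if status == 429 or 500 <= status <= 599:
            delay = min(delay * 2, self.max_delay)    # server is struggling: back off
        else:
            delay = max(delay / 2, self.base_delay)   # healthy again: recover gradually
        self._delays[host] = delay
        return delay
```

A crawl loop would call `record(host, status)` after every response and sleep for `current(host)` seconds before the next request to that host.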

Crawl Frequency and Update Cycles

Crawls process about three billion pages per cycle 4. Common Crawl releases 3-5 billion pages per monthly crawl 5. The total historical archive contains 250+ billion pages 5. For example, the August 2025 crawl added 2.42 billion pages and totaled over 419 TiB of data 11. Dataset sizes reach petabytes 5, stored on Amazon Web Services' Public Data Sets in the bucket s3://commoncrawl/, located in the US-East-1 (Northern Virginia) AWS Region 12. Access to the corpus hosted by Amazon is free 9.

Common Crawl organizes data into periodic crawls like CC-MAIN-2025-33 or CC-MAIN-2025-19 11. Each crawl corresponds to a time period: the identifier encodes the year and the calendar week, so CC-MAIN-2025-33 is the crawl from week 33 of 2025 11.

WARC File Generation Process

The WARC (Web ARChive) format provides the raw data from the crawl and offers a direct mapping to the crawl process 4 13. WARC files store the HTTP response from contacted websites (WARC-Type: response), information about how that information was requested (WARC-Type: request), and metadata on the crawl process itself (WARC-Type: metadata) 4 13. The format is append-only and immutable once written 4.

WAT (Web Archive Transformation) files contain important metadata about records stored in WARC format 4. This metadata is computed for each record type and includes HTTP headers and link graphs 4. WET (WARC Encapsulated Text) files contain plaintext content extracted from crawled HTML, suitable for NLP tasks 4. Because they contain only extracted plaintext, they fit tasks that require solely textual information 13.

Measuring Crawler Activity on Your Site

Server access logs record every request made to your website and capture complete data on both human users and bot interactions 14. Crawler software keeps logs of its own as well: in the crawler log format documented by Oracle, for example, each entry contains six tab-delimited columns: timestamp, message level, crawler thread name, component name, module name, and message 15. On your side, the raw access log provides an unfiltered view of how OpenAI bots, CCBot, and other AI web crawlers interact with your site 14.

Server Log Analysis Techniques

Your hosting panel often displays basic bot activity alongside normal traffic 16. Raw access logs available through cPanel reveal bot visits beside human activity 16. Standard log files capture the IP address, timestamp, requested URL, HTTP method, status code, and user-agent for each hit 17. You can analyze logs manually by downloading the data and parsing it in spreadsheets, or automate the analysis with specialized tools 18.

Four fields matter most: IP address to group hits and map to networks, user-agent to compare strings and flag odd combinations, timestamp to chart frequency and detect bursts, and status code to watch 429s and 403s 3. Correlation between these fields exposes stealth patterns over time 3.
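Extracting those fields is mostly a matter of one regular expression. The sketch below assumes the common Apache/Nginx "combined" log format; your server's format may differ, and the sample line is fabricated for illustration.

```python
import re
from datetime import datetime

# Assumed layout: ip ident user [time] "METHOD path protocol" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) '
    r'"(?P<referer>[^"]*)" "(?P<user_agent>[^"]*)"'
)


def parse_log_line(line):
    """Return a dict of fields for one access-log line, or None if it does not match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    entry = match.groupdict()
    entry["status"] = int(entry["status"])
    entry["bytes"] = 0 if entry["bytes"] == "-" else int(entry["bytes"])
    entry["time"] = datetime.strptime(entry["time"], "%d/%b/%Y:%H:%M:%S %z")
    return entry


SAMPLE_LINE = (
    '203.0.113.7 - - [12/May/2025:03:14:07 +0000] "GET /blog/post HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); '
    'compatible; GPTBot/1.3; +https://openai.com/gptbot"'
)

entry = parse_log_line(SAMPLE_LINE)
print(entry["ip"], entry["time"].isoformat(), entry["status"], entry["user_agent"])
```

Once every line is a dictionary, grouping by IP, user-agent, timestamp, or status code becomes ordinary data processing.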

Identifying Crawlers by User-Agent

Googlebot appears as Mozilla/5.0 (compatible; Googlebot/2.1; +https://www.google.com/bot.html) while one GPTBot string reads Mozilla/5.0 AppleWebKit/537.36 (compatible; GPTBot/1.0; +https://openai.com/gptbot) 17. Version tokens in these strings change over time, so match on the bot name rather than on the full string. User-agent strings alone prove unreliable because any malicious crawler can modify this identifier 19. Anyone can impersonate ClaudeBot from their laptop by spoofing the user-agent string 20.

IP verification provides reliable confirmation. Check all IPs against ranges that crawler operators publish 20. Reverse DNS verification confirms whether logged requests stem from legitimate sources 21.
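Checking an address against published ranges is straightforward with the standard `ipaddress` module. The JSON layout assumed below (a `prefixes` list whose entries carry `ipv4Prefix` or `ipv6Prefix`) is an assumption about the operator's file, so verify the key names against the real document; the ranges shown are reserved documentation addresses, not real crawler IPs.

```python
import ipaddress


def load_networks(published):
    """Build network objects from an already-parsed published-ranges document.

    Assumes {"prefixes": [{"ipv4Prefix": "192.0.2.0/24"}, ...]}; adjust the key
    names if the operator's file is structured differently.
    """
    networks = []
    for item in published.get("prefixes", []):
        prefix = item.get("ipv4Prefix") or item.get("ipv6Prefix")
        if prefix:
            networks.append(ipaddress.ip_network(prefix))
    return networks


def is_published_ip(ip, networks):
    """True if the logged IP falls inside any published range."""
    address = ipaddress.ip_address(ip)
    return any(address in network for network in networks)


example_ranges = {
    "prefixes": [{"ipv4Prefix": "192.0.2.0/24"}, {"ipv6Prefix": "2001:db8::/32"}]
}
networks = load_networks(example_ranges)
print(is_published_ip("192.0.2.55", networks), is_published_ip("198.51.100.1", networks))
```

A request whose user-agent claims to be a known crawler but whose IP fails this check is a strong candidate for spoofing.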

Request Volume Tracking

Set tight time windows and flag IPs that exceed your rate threshold 3. Chart spikes by path and group by user-agent 3. Real users wander through sites while bots sweep them 3. Several patterns signal automated crawling: sudden surges at night or off-peak hours, many 200 status codes followed by waves of 429/503, repeated hits on sitemaps and APIs, and uniform intervals between requests 3.
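The time-window check can be sketched as a sliding window per IP. The 60-second window and threshold of 100 requests are placeholder values to tune for your own traffic, and the input is assumed to be `(timestamp_in_seconds, ip)` pairs sorted by time.

```python
from collections import defaultdict, deque


def flag_bursty_ips(hits, window_seconds=60, threshold=100):
    """Return the set of IPs that made more than `threshold` requests within any window.

    hits: iterable of (timestamp_seconds, ip) tuples, sorted by timestamp.
    """
    recent = defaultdict(deque)   # ip -> timestamps still inside the window
    flagged = set()
    for timestamp, ip in hits:
        window = recent[ip]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the window.
        while window and window[0] <= timestamp - window_seconds:
            window.popleft()
        if len(window) > threshold:
            flagged.add(ip)
    return flagged


hits = sorted(
    [(second, "203.0.113.7") for second in range(10)]          # 10 hits in 10 seconds
    + [(second * 30, "198.51.100.2") for second in range(10)]  # one hit every 30 seconds
)
print(flag_bursty_ips(hits, window_seconds=10, threshold=5))
```

Only the first address is flagged here, since the second never has more than one request inside a 10-second window.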

Line graphs illustrate bot visit trends over time 14. A drastic drop in Googlebot visits may signal problems that require investigation, while spikes indicate code changes that prompt re-crawls 14.

Bandwidth Consumption Calculation

Bandwidth monitoring spots sharp jumps by IP, ASN, or path 3. Map bursts to log timestamps and run traffic analysis on bytes sent rather than just hits 3. Sort top IPs by bytes transferred, then review their request paths 3. One site owner reported that GPTBot consumed 30TB of bandwidth in a single month 22.
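Ranking sources by bytes rather than hits takes only a counter. The sketch assumes each log entry has already been parsed into a dictionary with `ip` and `bytes` keys; the sample values are invented.

```python
from collections import Counter


def top_talkers(entries, limit=3):
    """Sum response bytes per IP and return the heaviest sources first."""
    totals = Counter()
    for entry in entries:
        totals[entry["ip"]] += entry["bytes"]
    return totals.most_common(limit)


entries = [
    {"ip": "203.0.113.7", "bytes": 5000},
    {"ip": "198.51.100.2", "bytes": 800},
    {"ip": "203.0.113.7", "bytes": 7000},
    {"ip": "192.0.2.9", "bytes": 100},
]
for ip, total_bytes in top_talkers(entries, limit=2):
    print(ip, total_bytes)
```

An IP with few requests but a very large byte total often points at downloads of large assets, which hit counts alone would hide.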

404 Error Rate Analysis

High 404 volumes from small source counts indicate dictionary-style attacks that map site structure 23. Hundreds or thousands of "Not Found" responses in quick succession suggest that bots map hidden directories rather than behave like human visitors 23. Error distribution charts that track 404 or 500 errors over time simplify monitoring 14.

Technical Defense and Control Mechanisms

Controlling AI web crawler access requires layered implementation across protocol, application, and infrastructure levels. Each defense mechanism addresses different attack vectors.

robots.txt Configuration Examples

The robots.txt file communicates crawl priorities to compliant bots 24. Place this file at your root directory (https://example.com/robots.txt). You can block GPTBot while allowing search crawlers:

    User-agent: GPTBot
    Disallow: /

    User-agent: CCBot
    Disallow: /

Selective directory control permits specific paths:

    User-agent: GPTBot
    Allow: /public/
    Allow: /blog/
    Disallow: /

Note that robots.txt relies on voluntary compliance 24. Compliant bots obey these directives, but others ignore them 25.
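Before deploying rules like these, you can check how a parser interprets them. The sketch below feeds the directives from this section to Python's standard `urllib.robotparser`; the `example.com` URLs are placeholders. Python's parser applies the first matching rule in file order, while some crawlers use the most specific (longest) match, so results can differ for more intricate files, though both approaches agree for this one.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /public/
Allow: /blog/
Disallow: /

User-agent: CCBot
Disallow: /
"""

robots = RobotFileParser()
robots.parse(ROBOTS_TXT.splitlines())   # parse from memory, no network access

checks = [
    ("GPTBot", "https://example.com/blog/post"),
    ("GPTBot", "https://example.com/private/report"),
    ("CCBot", "https://example.com/blog/post"),
    ("Googlebot", "https://example.com/private/report"),
]
for agent, url in checks:
    print(agent, url, robots.can_fetch(agent, url))
```

Agents without a matching group, such as Googlebot here, are allowed by default because the file contains no wildcard group that restricts them.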

Rate Limiting Implementation

Rate limiting caps request frequency from individual IP addresses 26. A typical policy might allow ordinary users 60 requests per minute while restricting datacenter IPs to 10 requests per minute 27. Servers return 429 status codes when limits are exceeded 28. Well-behaved clients respond with exponential backoff: wait 1 second, then 2, then 4 before retrying 28.
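The 1, 2, 4 second schedule can be sketched from the client's point of view. Here `fetch` is a hypothetical callable that performs one request and returns its status code, and the sleep function is injectable so the logic can be exercised without actually waiting.

```python
import time


def fetch_with_backoff(fetch, max_retries=3, base_seconds=1.0, sleep=time.sleep):
    """Call fetch() until it returns something other than 429 or retries run out.

    fetch: hypothetical zero-argument callable returning an HTTP status code.
    """
    status = fetch()
    for attempt in range(max_retries):
        if status != 429:
            break
        sleep(base_seconds * 2 ** attempt)   # 1s, 2s, 4s with the defaults
        status = fetch()
    return status


# Simulated server that rate-limits twice and then succeeds.
responses = iter([429, 429, 200])
waits = []
final_status = fetch_with_backoff(lambda: next(responses), sleep=waits.append)
print(final_status, waits)
```

Doubling the wait after each rejection gives an overloaded server progressively more room to recover, which a fixed retry interval does not.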

Firewall Rules for Crawler Blocking

Apache servers use .htaccess rules to block user-agents 29:

    RewriteEngine On
    RewriteCond %{HTTP_USER_AGENT} GPTBot [NC]
    RewriteRule .* - [F,L]

IP-based blocking uses verified ranges from https://openai.com/gptbot.json 29.

Edge Platform Enforcement Methods

Cloudflare prepends managed robots.txt directives before existing files 30. Fastly Bot Management classifies traffic at the network edge and serves only legitimate requests to reduce origin costs 31.

Conclusion

AI crawlers like GPTBot and CCBot operate through systematic technical processes: DNS resolution, HTTP request construction, content parsing, link discovery, and structured data storage. These bots identify themselves through user-agent strings and IP ranges. Compliance with robots.txt varies considerably, though. According to OpenAI's updated documentation, robots.txt rules may not apply to ChatGPT-User, while GPTBot and CCBot continue to honor them.

Monitor your server logs and track bandwidth consumption patterns. Implement layered defense strategies that combine robots.txt directives with rate limiting and firewall rules. Understanding these technical mechanisms enables you to make informed decisions about protecting your content while balancing discoverability needs.

FAQs

Q1. Should I block AI crawlers like GPTBot and CCBot or allow them to access my site?

The decision depends on your content strategy and business model. If you run a content site where traffic equals revenue through ads or affiliates, blocking AI crawlers may protect your value since AI summaries can reduce click-throughs. However, for brand-building or thought leadership, allowing certain crawlers can increase visibility in AI-powered search results. Consider differentiating between AI search bots (like PerplexityBot) that provide source citations and training crawlers (like CCBot) that offer no referral traffic.

Q2. How can I identify which AI crawlers are accessing my website?

You can identify AI crawlers by examining your server access logs for specific user-agent strings. GPTBot appears as "Mozilla/5.0 AppleWebKit/537.36 (compatible; GPTBot/1.0; +https://openai.com/gptbot)" while CCBot shows "CCBot/2.0 (https://commoncrawl.org/faq/)". However, user-agent strings alone aren't fully reliable since they can be spoofed. For verification, cross-check IP addresses against officially published ranges from crawler operators.

Q3. Does blocking AI training bots affect my visibility in AI-powered search results?

Blocking AI model training bots (like GPTBot or Google-Extended) does not typically affect how often you appear in AI search results or real-time AI assistant responses. These are separate systems: training bots build foundational models, while search bots and assistant bots handle live queries and indexing for answer generation. You can block training crawlers while still allowing search-focused bots to maintain visibility.

Q4. How much traffic do AI search engines like Perplexity and ChatGPT actually send to websites?

Currently, referral traffic from AI search engines represents a small fraction of total traffic, typically less than 1-2% for most sites. Traditional search engines like Google still dominate by a significant margin. However, AI platforms may generate more "chat appearances" than actual click-throughs, with conversion rates around 7% from chat mentions to actual site visits. The traffic is growing but remains minimal compared to conventional search.

Q5. What's the most effective way to control AI crawler access to my site?

Implement a layered defense strategy combining multiple methods. Use robots.txt directives to communicate preferences to compliant bots, configure rate limiting to cap request frequency from individual IPs, and set up firewall rules to block specific user-agents or IP ranges. For more advanced control, edge platforms like Cloudflare can enforce rules at the network level. Remember that robots.txt relies on voluntary compliance, so combine it with technical enforcement mechanisms for comprehensive protection.

References

[1] - https://commoncrawl.org/faq

[2] - https://commoncrawl.org/ccbot

[3] - https://www.seohero.io/how-to-use-server-logs-to-identify-ai-crawler-behavior/

[4] - https://commoncrawl.org/about

[5] - https://botdetector.io/bots/ccbot/

[6] - https://developers.openai.com/api/docs/bots

[7] - https://blog.cloudflare.com/from-googlebot-to-gptbot-whos-crawling-your-site-in-2025/

[8] - https://ppc.land/openai-revises-chatgpt-crawler-documentation-with-significant-policy-changes/

[9] - https://commoncrawl.org/overview

[10] - https://en.wikipedia.org/wiki/Common_Crawl

[12] - https://commoncrawl.org/get-started

[13] - https://commoncrawl.org/blog/navigating-the-warc-file-format

[14] - https://searchengineland.com/server-access-logs-seo-448131

[15] - https://docs.oracle.com/cd/E26592_01/doc.1122/e23427/crawler007.htm

[16] - https://www.hostarmada.com/blog/crawler-log-monitoring-alerts/

[17] - https://searchengineland.com/guide/log-file-analysis

[18] - https://www.botify.com/blog/tracking-ai-bots-with-log-file-analysis

[19] - https://stackoverflow.com/questions/10995804/identify-crawlers-from-user-agent

[20] - https://www.searchenginejournal.com/ai-crawler-user-agents-list/558130/

[21] - https://developers.google.com/crawling/docs/crawlers-fetchers/google-common-crawlers

[22] - https://www.inmotionhosting.com/blog/ai-crawlers-slowing-down-your-website/

[23] - https://www.parcelperform.com/insights/ecommerce-infrastructure-mapping-bots

[24] - https://developers.google.com/search/docs/crawling-indexing/robots/intro

[25] - https://www.conversios.io/blog/bot-traffic-filtering-and-prevention-guide-for-ga4-and-google-ads/

[26] - https://www.cloudflare.com/learning/bots/what-is-rate-limiting/

[27] - https://ipasis.com/blog/detect-block-bot-traffic-edge

[29] - https://www.playwire.com/blog/the-complete-list-of-ai-crawlers-and-how-to-block-each-one

[30] - https://developers.cloudflare.com/bots/additional-configurations/managed-robots-txt/